Revolutionizing AI: pioneering research blends artificial neural networks with neurobiology

Artificial neural networks have been a staple of machine learning for years. Their structure is loosely modelled on the way biological neurons in the brain process information: simple units joined by weighted connections. Those connections are adjusted as the network is trained on examples, so it learns to perform a task without being programmed with task-specific rules. This resemblance to the human brain’s functioning has long intrigued experts around the globe.
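To make "learning from examples" concrete, here is a minimal sketch of a single artificial neuron (a classic perceptron) that learns the logical AND function from four labelled examples. It is an illustration only and is not drawn from the research discussed in this article; the names `train_perceptron`, `predict` and `AND_EXAMPLES`, the learning rate and the number of passes are all assumptions chosen for the demo.

```python
def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum of the inputs exceeds zero."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Adjust the connection weights whenever the neuron gets an example wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            # No task rule is written here: the weights only move
            # in response to mistakes on the examples.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical training data: the truth table of logical AND.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_EXAMPLES)
for inputs, target in AND_EXAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "(expected", target, ")")
```

Nowhere does the code state the rule "output 1 only when both inputs are 1"; the neuron arrives at that behaviour from the examples alone, which is the idea that larger networks scale up.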

Reshaping this technological landscape is the Transformer, an AI architecture that has had an outsized impact on machine learning. Conversational AI systems such as ChatGPT and Bard, known for producing human-like conversation and writing, are built on Transformer-based models.

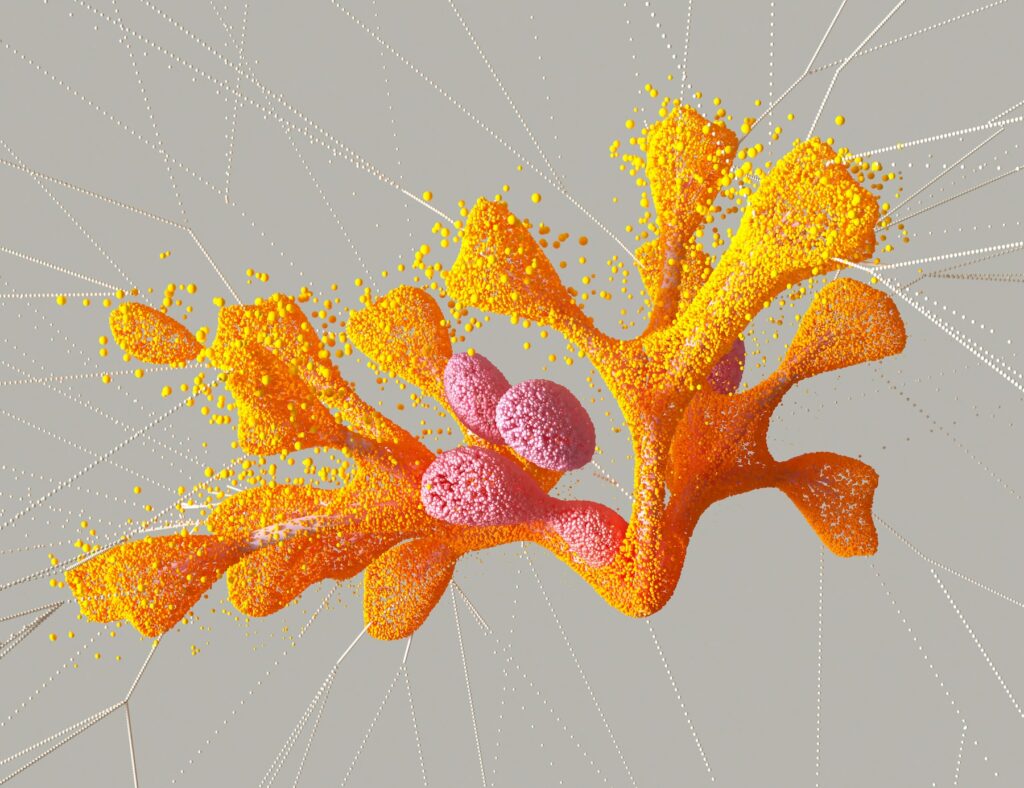

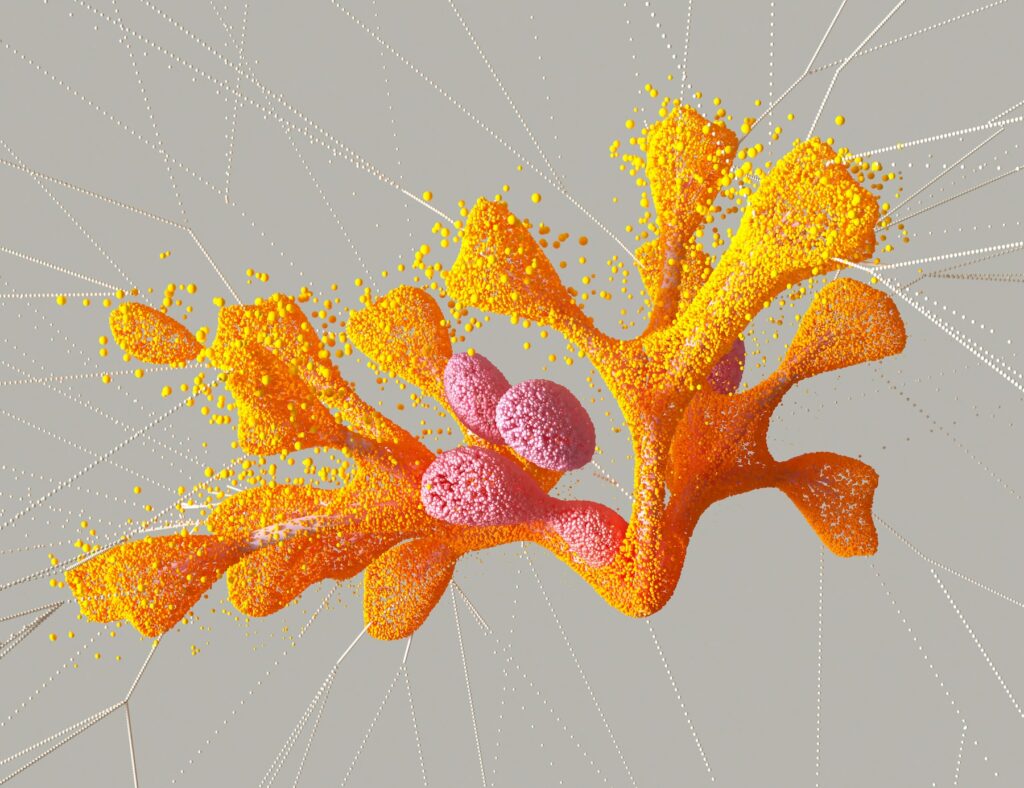

A recent research collaboration between the Massachusetts Institute of Technology (MIT), the MIT-IBM Watson AI Lab, and Harvard Medical School took this concept a step further, proposing that a Transformer could be constructed from biological components found in the brain. The aim was to develop an AI approach that better emulates the brain’s structure and function.

The researchers focused on astrocytes, a kind of glial cell in the brain that interacts with neurons. Rather than presenting another Transformer variant, the research introduces a precise mathematical framework that describes these neuron-astrocyte interactions, thereby enriching our understanding of artificial models.

The researchers proposed shared-weight models that place Transformers in a biological context, following both astrocytic and non-astrocytic approaches. This dual approach brings us a step closer to modelling neuronal responses more accurately.

Another term you will come across in this research is the tripartite synapse: the three-way junction formed by a presynaptic neuron, a postsynaptic neuron, and an astrocyte. Communication among these three elements may play the role of the ‘self-attention’ mechanism within a Transformer model, the operation by which the model weighs how relevant each part of its input is to every other part, much as the brain focuses energy and resources on functions that contribute to learning and memory.
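For readers who have not met self-attention before, the following is a minimal sketch of the standard scaled dot-product form used in ordinary Transformers. It is not the neuron-astrocyte model from the research, and every name and number in it (`self_attention`, the three toy token vectors, the identity projection matrices) is an assumption made for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def self_attention(tokens, w_query, w_key, w_value):
    """Scaled dot-product self-attention over a short sequence of vectors."""
    queries = matmul(tokens, w_query)
    keys = matmul(tokens, w_key)
    values = matmul(tokens, w_value)
    scale = math.sqrt(len(keys[0]))
    attention = []
    for query in queries:
        # Score this token against every token in the sequence, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / scale
                  for key in keys]
        attention.append(softmax(scores))
    # Each output row is a weighted blend of all the value vectors.
    return matmul(attention, values), attention


# Hypothetical toy input: three 2-dimensional "tokens" and identity projections.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

output, attention = self_attention(tokens, identity, identity, identity)
for row in attention:
    print([round(weight, 3) for weight in row])
```

Each printed row shows how strongly one token attends to every token in the sequence. In a trained Transformer the three projection matrices are learned rather than fixed; identity matrices are used here only to keep the arithmetic easy to follow.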

The researchers used the mathematical components of a Transformer to build biophysical models of neuron-astrocyte interactions, effectively blending neuroscience with AI.

This research, especially the neuron-astrocyte network equation, has the potential to illuminate the brain’s structure and to inform AI development. Closing the gap between AI and neurobiology could enhance machine learning models’ ability to learn, reason and self-correct.

Beyond its significance for AI and neuroscience, this investigation opens up avenues for progress in many associated fields. Mirroring human brain function to develop machine capabilities offers academia and industry alike the prospect of more human-like AI, while also teaching us more about ourselves in the process.

In conclusion, this work at the intersection of biological and artificial neural networks may be only the opening of a new chapter in our AI journey. The researchers themselves acknowledged that this is merely step one on the path to understanding the complex dynamics of the brain and integrating that understanding into artificial neural networks. Only time will tell how far this line of work will go.

Casey Jones


Disclaimer

The information this blog provides is for general informational purposes only and is not intended as financial or professional advice. The information may not reflect current developments and may be changed or updated without notice. Any opinions expressed on this blog are the author’s own and do not necessarily reflect the views of the author’s employer or any other organization. You should not act or rely on any information contained in this blog without first seeking the advice of a professional. No representation or warranty, express or implied, is made as to the accuracy or completeness of the information contained in this blog. The author and affiliated parties assume no liability for any errors or omissions.